Reviews are a fickle bunch. The better you know their biases, the better you can use them to help your business.

A little exercise: Say you went to Amazon to find a product, but this time all of the review content was gone. You would feel the vacuum. The isolation. How are you supposed to know if humidifier A is better than humidifier B?

Reviews are foundational to providing consumer perspectives online. And yet, because anyone can contribute one, they’re not without flaws.

I was listening to the After Hours podcast by HBR and came across an early episode on reviews.

Harvard business professors Youngme Moon, Mihir Desai and Felix Oberholzer-Gee try to “make sense of online reviews,” and they land on the phrase that gives this article its title: useless and yet indispensable.

What caught my attention was their discussion of how reviews can simultaneously be hugely valuable and utterly frustrating. Their judgment at the time (2018) was that the business world handles reviews in a clumsy manner.

The result is equal parts vague for the reader of the review and short on constructive feedback for the business.

For LMI #037, I’m going to go through some fundamental biases in online reviews so that the LMI community can use these realities to make better strategic decisions.

An Uphill Battle

There is limited context

Each reviewer comes to a review with a different set of life experiences.

Different interests, values, skillsets.

One’s perspective colors their reviews in ways that are not easily identifiable to the reader.

Worth considering: Responding to reviews helps add context and leads to 12% more reviews.

Quality vs. price

Understanding how a reviewer trades off quality and price is even more challenging.

Take the case of a hotel. You can have a great experience at an objectively lower-quality hotel because the lower price associated with that experience warrants lower expectations from the start.

At the same time, you can have a bad experience at an objectively high-quality hotel, possibly taking issue with something that you would let slide at a more affordable price point.

Hypothetical: Is it possible to isolate the influence of price in reviews?

How we rate differs by region

Cultural norms influence ratings.

There is good research on how net promoter scores (NPS) differ by country.

In Europe, an 8 out of 10 on the NPS question is considered good, a 9 is great and a 10 is impeccable. Getting a 10 is considered almost impossible.

In Japan, it’s considered poor etiquette to rate any business too high or too low, regardless of performance. Japan has the lowest median NPS rating, at 6.

In the US, the cultural standard is to give a very high rating if the experience is positive, only dropping ratings for exceptionally poor experiences. The US median NPS rating is 9.

Internationally, the US consistently has the highest average ratings.
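To see why regional rating habits matter, here is a minimal Python sketch of the standard NPS calculation (percent of promoters rating 9-10 minus percent of detractors rating 0-6). The two rating lists are hypothetical, made up for illustration: the same ten equally satisfied customers, with one group habitually rating two points lower.

```python
def nps(ratings):
    """Net promoter score: % promoters (9-10) minus % detractors (0-6)."""
    if not ratings:
        raise ValueError("ratings must not be empty")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100 * (promoters - detractors) / len(ratings)


# Hypothetical data: ten satisfied customers who rate generously.
generous_raters = [9, 9, 10, 9, 8, 10, 9, 7, 9, 10]

# Hypothetical data: the same experiences, rated two points lower by habit.
reserved_raters = [r - 2 for r in generous_raters]

print(nps(generous_raters))  # 80.0
print(nps(reserved_raters))  # -20.0
```

Identical experiences, a 100-point swing in score. If you compare locations across countries, it is probably safer to benchmark each one against its own regional norm than against a single global target.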

Rating inflation leads to lost meaning

Uber is a great example here.

Anybody who uses the app knows that unless you have a truly problematic experience, you’re expected to give a 5-star rating.

Because of this, the value of the rating is wildly inflated and, as a result, largely without meaning.

A 4.9-5.0 rating is needed to meet expectations; anything else is below the norm. There is little room for constructive and non-punitive feedback.

Extreme cases are overly represented

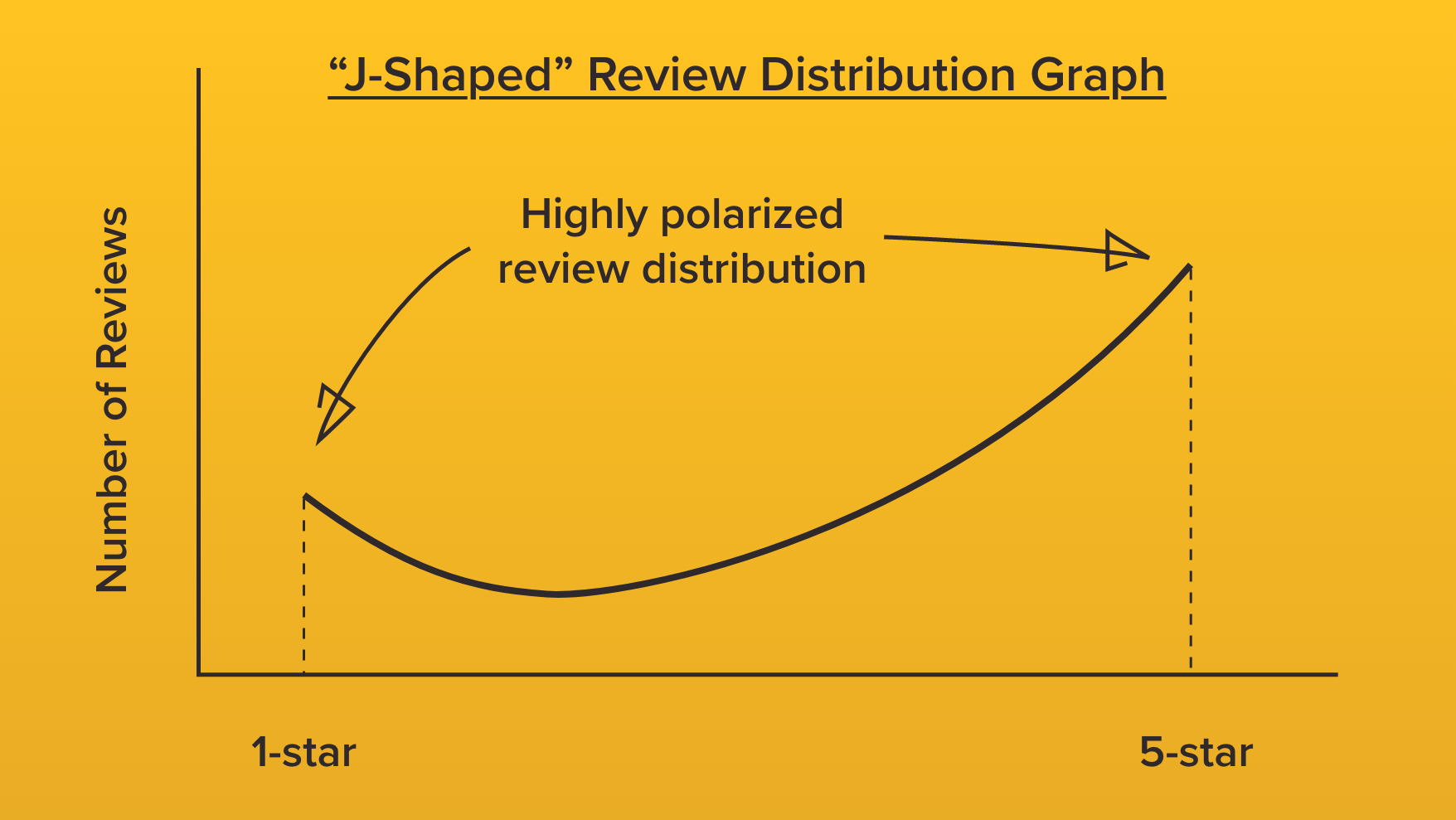

The podcast hosts touch upon a historically common issue with reviews: the distribution of opinion is highly polarized, with many extreme positive and extreme negative views, and few moderate ones.

This phenomenon is well documented in academic studies, where it shows up as a “J-shaped” review distribution graph.

This outcome is driven by motivation. Those with extreme experiences will be more likely to share.

For a business, fighting this tendency makes your review content more representative of what is actually going on and, as a result, more helpful for prospects.

Asking all of your customers for a review and making the process as easy as possible will reduce the likelihood of a polarized review distribution.
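A rough way to picture this is a small Python sketch. Every number in it is a hypothetical assumption, not data from the podcast or any study: 1,000 customers with a fairly ordinary spread of experiences, and a made-up chance of leaving a review at each star level.

```python
def expected_reviews(customers, response_pct):
    """Expected review count per star level, given a response rate in percent."""
    return {
        stars: customers[stars] * response_pct[stars] // 100
        for stars in customers
    }


# Hypothetical: how 1,000 customers actually felt, by star rating.
customers = {1: 100, 2: 100, 3: 300, 4: 300, 5: 200}

# Hypothetical: left alone, mostly the unhappy and the delighted bother to post.
self_selected = {1: 30, 2: 5, 3: 2, 4: 5, 5: 40}

# Hypothetical: asking everyone, with an easy process, evens out the response rate.
ask_everyone = {1: 20, 2: 20, 3: 20, 4: 20, 5: 20}

print(expected_reviews(customers, self_selected))
# {1: 30, 2: 5, 3: 6, 4: 15, 5: 80}
print(expected_reviews(customers, ask_everyone))
# {1: 20, 2: 20, 3: 60, 4: 60, 5: 40}
```

The first result has the J shape: a bump at 1 star, a dip in the middle and a spike at 5 stars. The second mirrors how customers actually felt, which is the point of asking everyone.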

In a related study of reviews on Glassdoor, the company review platform, researchers found that pro-social incentives led to a less biased review distribution:

"Our results show that people are more likely to leave online reviews when they’re reminded that doing so helps other job seekers. Simple, pro-social incentives also led the distribution of reviews to be less biased, creating a more normal bell-curve distribution of reviews."

To the reader, we say, “best of luck”

Put together everything outlined above and, with limited input from the review sites or businesses, the reader of your reviews is left on their own to account for these biases or, more likely, to proceed unaware of them.

It’s not on you

Building a review system that limits these issues is a problem the review platforms, not local businesses, will likely have to solve.

But, here are the steps businesses can take to improve their review content:

Bonus: happy or not?

At scale, even the most simplistic feedback can have value.

The company HappyOrNot partners with airports across Europe, placing a simple smiley face, neutral face, or frowny face feedback system in security lines.

The result is a real-time feedback system. Lines are ranked and the current performance is displayed for each security team to see.

The ranking system motivated poorly rated security line staff to immediately respond with better service.

I’m the Director of Marketing here at Widewail, as well as a husband and new dad outside the office. I'm in Vermont by way of Boston, where I grew the CarGurus YouTube channel from 0 to 100k subscribers. I love the outdoors and hate to be hot, so I’m doing just fine in the arctic Vermont we call home. Fun fact: I met my wife on the shuttle bus at Baltimore airport. Thanks for reading Widewail’s content!
